"Bidding" for Jobs?

Thoughts on an Auction-Based Approach to Costly Signalling in an AI-Saturated Labor Market

Recently, the problem of AI labour market frictions has resurfaced in the discourse, prompted by a business-technology influencer post instructing users on how Claude can be leveraged to apply to more than 50 jobs in 30 minutes. A few weeks later another appeared, this time citing a figure of more than 700 autonomously managed applications, custom tailored to the company and job listing, with a link to the open-source specifications. This escalation represents a kind of perverse Moore's Law for AI job application volume, and is a serious problem for both those hiring and those seeking to be hired. I have discussed AI as a source of labor market noise before in my previous post, Why AI Isn’t Taking Your Job Anytime Soon, but in that article I failed to propose a meaningful solution. Instead I focused on highlighting the detrimental effects that it has had on our labour market matching, particularly at the entry level.

Since then, I have joked that a solution to the AI-induced labour market signalling noise could be an auction-theoretic framework where prospective applicants bid compute tokens for the opportunity to interview. This sounds absurd, at first. The idea that a hopeful job applicant would actually pay a prospective employer to be considered for a job raises all kinds of issues with respect to efficiency and equity, but as I will explain, the theory behind it is sound.

The labor market currently lacks a meaningful cost of signalling, and that absence is destabilising matching. In this article I want to explore some of the considerations as to what this sort of platform might realistically look like, and whether a new mechanism for job applications offers a workable alternative that addresses AI noise in the application process, by internalising signalling costs and improving search and match efficiency.

This post is not a formal model, nor a fully worked-out policy/platform proposal. Instead, think of this as a kind of structured thought experiment: if AI has collapsed the cost of signalling in labor markets, intuitively, what happens if we reintroduce cost in a different form? What frictions does that resolve and what new ones is it expected to create?

The Problem

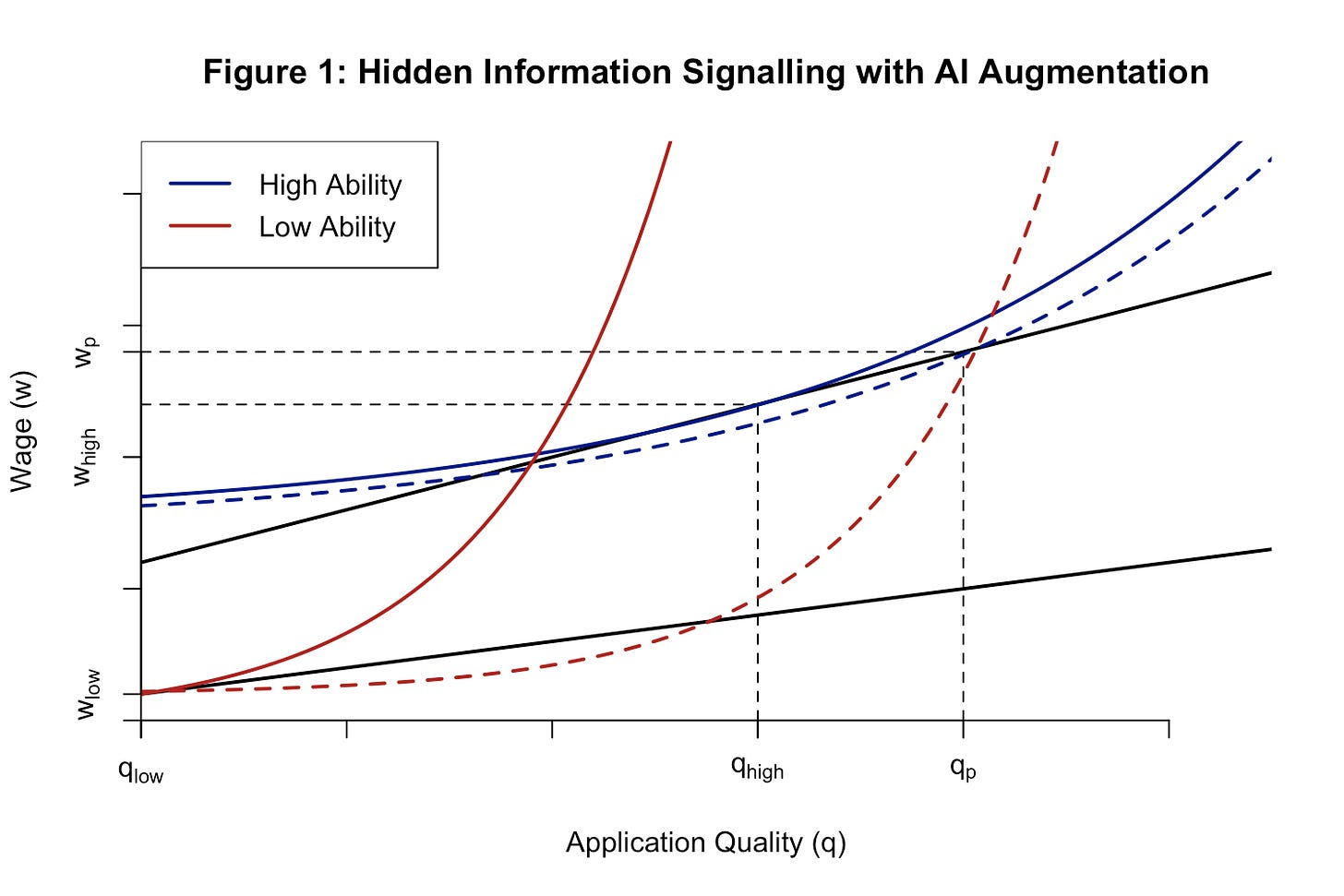

First, it’s important to reiterate precisely what the problem is. Generative AI has dramatically reduced the cost of producing polished job applications. High-quality resumes, cover letters, and take-home assessments can be generated at significantly lower cost, collapsing the informational content traditionally held by these signals. In classic labour market signalling models, costly signals effectively separate high- and low-quality candidates by the relative cost of effort for the respective individuals. When the cost of producing these signals approaches zero, you get a pooling equilibrium where everyone can produce a “high-quality” application, meaning these two kinds of candidates are no longer differentiable to a prospective employer that values them differently. AI-assisted application materials reduce the type-differential cost of producing polished observable signals. As those costs converge across candidate types, standard separating logic weakens and the market moves toward a pooling or at least less-informative semi-separating outcome.

Figure 1: The diagram illustrates a signalling model with asymmetric information, showing how AI augmentation reduces the cost of application material for low-ability workers, thereby shifting the labour market from a separating to a pooling equilibrium in the wage–quality space.
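
The separating logic can be made concrete with a minimal sketch. It assumes a stylised two-type setup in which a polished application earns a fixed premium and costs each type a different amount of effort. The function name, the decision rule, and every number are illustrative assumptions, not estimates.

```python
def classify_equilibrium(interview_premium, cost_high_type, cost_low_type):
    """Classify the outcome of a stylised two-type signalling game.

    Assumptions: a candidate sends the polished signal whenever its cost
    does not exceed the premium it earns, and the signal is never cheaper
    for the low type than for the high type.
    """
    if cost_low_type < cost_high_type:
        raise ValueError("expected the low type to face the higher signalling cost")
    high_signals = cost_high_type <= interview_premium
    low_signals = cost_low_type <= interview_premium
    if high_signals and not low_signals:
        return "separating"  # only strong candidates signal, so the signal is informative
    if high_signals and low_signals:
        return "pooling"  # everyone signals, so the signal carries no information
    return "no signalling"  # the signal is too costly for either type


# Before AI: polishing an application is much harder for weak candidates.
print(classify_equilibrium(interview_premium=10, cost_high_type=4, cost_low_type=15))
# With AI: both types can polish cheaply, so the cost gap no longer separates them.
print(classify_equilibrium(interview_premium=10, cost_high_type=1, cost_low_type=2))
```

The point of the sketch is only that the outcome flips once both costs fall below the premium, even though the ordering of the two costs is unchanged.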

High-quality applications have thus exploded in volume. Firms spend more time screening, hiring slows, and strong candidates get lost in the large applicant pool, as employers can no longer reliably tell the difference between candidates. Having heard reports from hiring managers over the last few months, I want to update this theory slightly from my prior explanation. While application quality has increased, automated material generation has also increased the volume of bad applications (see the 700 fully automated AI applications mentioned above). Whether it is obvious AI writing or something as simple as listing the wrong company and position in a cover letter, the compression of “good” applications has occurred alongside a flood of dreck that has made manual filtering untenable.

To tie this phenomenon to the excellent work done by Kyla Scanlon on the growing role of gambling in our collective consciousness and the economy more generally, job applications have effectively become a numbers game for those entering the market. Instead of honing your application on a select few positions that you are eminently qualified for, the dominant strategy for a recent grad is to simply produce and send out applications as quickly as possible, in most cases with the help of AI tools. Sending out as many applications as possible becomes an equilibrium best response once the marginal cost of each additional application approaches zero. The expectation is that only a narrow sliver will be read, let alone considered, given this noisy information environment. Thus the job market has become an opaque and impersonal process that feels effectively random to the outside observer, not unlike gambling, where the best method of improving the odds is an exponentially increasing quantity of applications.
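
A rough sketch of why volume becomes the best response as marginal cost falls. It assumes every application has the same independent chance of success, that the applicant needs only one job, and that they keep applying while the expected gain from one more application covers its cost. The function name and parameter values are hypothetical.

```python
def optimal_application_count(job_value, success_prob, cost_per_application,
                              max_applications=100_000):
    """Number of applications a cost-benefit applicant would send.

    Assumptions: identical, independent success probabilities and a single
    job needed, so an extra application only pays off if all earlier ones fail.
    """
    count = 0
    while count < max_applications:
        # Expected gain from one more application, given `count` already sent.
        marginal_gain = job_value * success_prob * (1 - success_prob) ** count
        if marginal_gain < cost_per_application:
            break
        count += 1
    return count


# Same job, same odds; only the cost of producing one more application changes.
for cost in (5.0, 0.5, 0.05):
    sent = optimal_application_count(job_value=1000, success_prob=0.02,
                                     cost_per_application=cost)
    print(f"cost per application {cost}: send {sent} applications")
```

Under these assumptions the count rises steadily as the cost per application shrinks, which is the spam dynamic in miniature.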

Further, this is where we see matching quality begin to deteriorate. An individual casting a wider net and applying to 100 instead of 10 jobs has consequences in terms of the desired allocation of skill and interest. An economist, for example, might be more suited for a job at a policy think tank, but by virtue of a limited number of those positions currently hiring, said economist will end up applying across industry, non-profit, government, and academic roles that they otherwise might not have considered. With the reduction in effort put into applying for each position and collapse of informational content, there is no greater likelihood that a person might receive their desired position over one of the others, and as quantity increases breadth dominates specificity.

However, aside from the volume approach, the current meta to reliably secure an interview is networking. If someone trusted recommends you, employers can assume that you’ve cleared some sort of quality threshold. But in this context, reputation serves as an imperfect solution, restricting the candidate pool to clusters of well-connected applicants. LinkedIn and other network-based platforms like it benefit most from a network-based hiring model like this and would not actually stand to gain from more efficient matching on merit. Job listings on these platforms are notably accompanied by a list of who you might know at a given institution and mutual connections. Thus, we would not expect to see a platform like LinkedIn introduce the sort of model I’m about to outline for improving this process.

Ultimately, this problem produces two imperfect pools of applicants for employers to choose from. On the one hand, you have the general applicant pool, which contains a wide range of applicants that might have some high-quality matches, but those end up hard to distinguish if not buried by low-quality or disinterested candidates. On the other, you have the in-network pool, which has a verified set of skills and meets a certain quality threshold, but may not be as well suited for the position that an employer is looking for.

With companies still competing against each other to hire, there is some difficulty in implementing a reliable solution to an in-house hiring system. AI interviewing and manual inputs for education and experience are costs that limit application volume, but risk deterring candidates by wasting their time. Meanwhile, the platforms listing the positions don’t necessarily have an incentive to produce better matching if they use a network-based business model, leaving the market in a sub-optimal matching equilibrium.

Framed more formally, this is a platform design problem under asymmetric information. Beyond the first round of basic filters, firms seek to allocate scarce interview slots to the highest-quality candidates, but applicants possess private information about their own quality type and fit. The challenge is to design a mechanism that induces applicants to not just credibly reveal this private information, but do so in a way that is differentiable to employers, despite the collapse of traditional signalling channels.

The relevant microeconomic problem is not simply that applications have become more numerous. It is that a previously informative signal has lost much of its separating power. In a standard signalling environment, observable application quality can help firms infer an applicant’s type when the cost of producing that signal is sufficiently higher for lower-type applicants than for higher-type applicants. Once generative AI compresses those costs, the observable action becomes less informative, pushing the market away from separation and toward pooling or weakly informative equilibrium. At that point, firms face a screening problem: scarce interview slots must be allocated under asymmetric information, but the usual observable signals no longer reliably distinguish types.

The platform-design question is thus, how can we address the problem of near-costless applications, creating a system where a given applicant can credibly demonstrate their qualification for the role to which they are applying, without some kind of in-network connection or socially wasteful cost to bypass the LLM noise?

The Solution

My proposed auction-theoretic solution started as a bit tongue-in-cheek, but it fundamentally addresses the underlying problem. If AI reduces the cost of signalling, there needs to be a new cost mechanism. As it stands, the cost of applications is volume or networking. You are no longer putting so much effort into a single application to make it stand out from the crowd. There is no way to make it stand out from the crowd. Thus, you simply have to submit application after application after application, or put in the effort to network and make personal connections within a firm. You are producing effectively the same quality application for every position you see, regardless of how much you actually want that position or even are a good match for the job, or simply relying on a personal referral to break through.

I appreciated Matt Darling’s recent article framing the current job market in terms of dating apps, as a sort of gamification of the application process, using similar reasoning to explain why no one’s being hired despite relatively low unemployment. This article emphasises that it’s already been gamified for a while, recent technology has just broken down the prevailing incentives. Now, what I’m hoping to do in this discussion is take this gamification to the next logical stage by asking, what rules can we implement to produce better outcomes? How can we restructure or introduce components to the application process that incentivises a stronger pool of applicants and less spam for prospective employers to sift through?

My thesis is that this outcome is the result of a low-effort pooling equilibrium, so we must solve for how to introduce a new set of costs that will not needlessly waste an applicant’s time. This is where I arrive at an auction-theoretic framework for job applications.

The Applicant

Imagine a job platform where applicants receive an initial endowment of tokens. To apply for a job, you must bid on that job using said tokens. The higher the bid, the greater the likelihood that your application will be reviewed or moved forward into the interview stage. Bids that fall below a certain cutoff, say those outside the top third of applicants, are discarded and the applicant loses the tokens offered. In this framework, interview slots are treated as scarce goods and applicants are bidders, where each applicant’s private valuation reflects the expected surplus from obtaining the job.
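
To make the mechanics concrete, here is a minimal sketch of how one vacancy's auction might be resolved. The function name, the alphabetical tie-breaking rule, and the rounding of the shortlist size are my assumptions for illustration; the post only fixes the idea that bids are ranked, a top share advances, and everyone's tokens are spent.

```python
import math
from fractions import Fraction


def run_interview_auction(bids, balances, shortlist_share=Fraction(1, 3)):
    """Resolve one vacancy's all-pay token auction.

    bids: applicant name -> tokens bid on this vacancy
    balances: applicant name -> tokens held before bidding
    Returns (shortlist, updated balances).
    """
    for applicant, bid in bids.items():
        if bid <= 0 or bid > balances[applicant]:
            raise ValueError(f"invalid bid from {applicant}: {bid}")

    # Assumption: the shortlist is the top share of bidders, rounded up,
    # with ties broken alphabetically purely to keep the example deterministic.
    slots = math.ceil(len(bids) * shortlist_share)
    ranked = sorted(bids, key=lambda name: (-bids[name], name))
    shortlist = ranked[:slots]

    # All-pay: every bidder forfeits the tokens they bid, shortlisted or not.
    updated = dict(balances)
    for applicant, bid in bids.items():
        updated[applicant] -= bid
    return shortlist, updated


balances = {name: 100 for name in ["ana", "ben", "cy", "dee", "eli", "fay"]}
bids = {"ana": 60, "ben": 5, "cy": 35, "dee": 10, "eli": 1, "fay": 20}
shortlist, balances = run_interview_auction(bids, balances)
print(shortlist)        # ['ana', 'cy']
print(balances["ben"])  # 95
```

Note that ben loses 5 tokens despite not being shortlisted, which is what makes a speculative bid costly.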

First, I propose an equal initial endowment to avoid many of the concerns that come to mind with an auction model.

If we were to introduce a pecuniary cost structure such that prospective applicants would simply pay real money, you immediately run into sorting problems. Applicants of means would be able to abide by essentially the same dominant strategy as before, casting a wide net, while more financially strained applicants would be significantly limited, reproducing many of the inequities that we might see in a network-based model.

Second, we want to avoid a time-based constraint. An example given by Ben Boehlert: you could hypothetically have applicants watch paint dry for an hour as a kind of arbitrary cost filter. This might actively deter some candidates, but the value of that hour might actually be worth more to a more qualified applicant. There’s a greater opportunity cost to spending that time watching paint dry, and thus you would see some discrimination against the better qualified candidates, which is a suboptimal outcome for a prospective employer, and further a needless time sink for the interested applicants.

Thus, we arrive at some equal endowment of externally value-less tokens across all participants.

Now, because tokens are scarce, applicants face a real cost constraint and can no longer send out hundreds of applications. There is now an implicit cap on the quantity of applications you can submit. Rather, you must allocate a limited budget across the positions that you are most qualified for and that represent the greatest expected value to you as an individual.

Each applicant reviews the position requirements, responsibilities, location, and compensation and makes a determination of how much they value consideration for that job. Where under the current system, an application submission carries little informational content, simply that the listing could be fed into an LLM to produce a good enough cover letter, there is now a value attached, representing applicant interest and confidence that they would be a good fit for the position. Poor matches are now costly.

From an employer perspective, this now becomes a meaningful signal. An applicant who commits more tokens is signalling that this is one of the few positions that they are prioritising via an all-pay auction for employer attention. The materials themselves may not have changed, but there is now this additional dimension upon which an employer can recognise a signal in terms of quality and matching. In doing so, we assume that the interview stage remains a valid mechanism for final selection, and the current barrier is in applicant selection to interview.

Under the standard assumptions, equilibrium bids reflect expected value. Candidates who believe they are a strong fit for a role will rationally bid more than those who are only marginal matches. Thus, the spamming of a general application is no longer a dominant strategy. Limited to a fixed budget of tokens, an applicant must now be more deliberate in their process and the jobs that they apply to.
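
As a rough illustration of bidding in line with expected value, the sketch below splits a fixed token budget across vacancies in proportion to the applicant's own estimate of expected surplus. This proportional rule is a simple heuristic I am assuming for the example, not a derived equilibrium strategy, and the job names and surplus figures are invented.

```python
def allocate_tokens(expected_surplus, budget):
    """Split an integer token budget in proportion to expected surplus.

    expected_surplus: job -> applicant's own estimate (e.g. chance of an
    offer times how much they value the role). Jobs with no positive
    surplus receive no tokens.
    """
    bids = {job: 0 for job in expected_surplus}
    worthwhile = {job: s for job, s in expected_surplus.items() if s > 0}
    total = sum(worthwhile.values())
    if total == 0:
        return bids

    # Proportional shares, rounded down to whole tokens.
    raw = {job: budget * s / total for job, s in worthwhile.items()}
    for job, share in raw.items():
        bids[job] = int(share)

    # Hand any tokens lost to rounding to the largest fractional remainders.
    leftover = budget - sum(bids.values())
    by_remainder = sorted(raw, key=lambda job: raw[job] - bids[job], reverse=True)
    for job in by_remainder[:leftover]:
        bids[job] += 1
    return bids


surplus = {"think_tank": 6.0, "consultancy": 3.0, "retail_bank": 1.0, "unrelated_role": 0.0}
print(allocate_tokens(surplus, budget=100))
# {'think_tank': 60, 'consultancy': 30, 'retail_bank': 10, 'unrelated_role': 0}
```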

This wouldn’t be that different from introducing a general cap within your platform of, say, 10 applications over a given period of time. You would achieve a similar outcome by capping the number of applications, because that again turns applications into an allocation problem. Where a simple cap falls short is that it gives up the auction’s role as a ranking mechanism. Built into the token system, you now have a kind of rate or numerical commitment that demonstrates just how much an individual cares about this position.

The appeal of this mechanism lies in its ability to restore incentive compatibility. Higher-quality candidates, those with a greater probability of receiving offers, have a greater expected return from securing an interview and therefore a higher willingness to bid because they expect to perform well, value the role more, or believe they have a higher probability of success. Lower-quality candidates, facing lower expected returns, optimally bid less or exit altogether. In this respect, bids function as a screening device, reintroducing separation in a market that has otherwise collapsed into pooling, since higher expected match surplus leads stronger matches to bid more aggressively and reach the final stages of the interview process.

The Employer

Thus far, I have discussed what the model demands of applicants. Now, it’s time to turn towards what a platform like this demands of employers.

An issue that’s been raised is whether or not this might introduce monopsony power on behalf of employers. Given the constraints of the platform, there is concern that there might be a world where an employer is able to exercise undue market power to diminish wages from this pool of hopeful employees, because there are now fewer firms receiving competitive applications.

This is a somewhat intuitive concern, in the sense that if you were able to send out a fixed number of applications, say 10, employers on the platform would no longer be competing with an unlimited number of other hiring firms. They would instead only be competing with 9 other prospective employers.

However, when considering this, we also need to think about how many applications they will be receiving. This hypothetical market still has the same number of total listings and the same number of total applicants. While application volumes are reduced there is the same level of market competition by virtue of an equivalent number of prospective employers and employees in the market.

The mechanism does not mechanically create monopsony. Whether it increases employer market power depends on how it changes effective labor supply elasticity at the firm level, for example through exclusivity, application caps, or concentration of applicant attention. The platform largely acts as a selection filter without necessarily contributing to the concentration of market power among either transacting party.
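
Why firm-level elasticity is the quantity to watch can be shown with the textbook monopsony markdown, in which a profit-maximising firm facing labor supply elasticity e pays a share e / (1 + e) of a worker's marginal revenue product. The elasticity values below are made up for illustration, and nothing here estimates what the proposed platform would actually do to them.

```python
def wage_share_of_marginal_product(labor_supply_elasticity):
    """Textbook monopsony result: wage / marginal revenue product = e / (1 + e)."""
    if labor_supply_elasticity <= 0:
        raise ValueError("elasticity must be positive")
    return labor_supply_elasticity / (1 + labor_supply_elasticity)


# Hypothetical elasticities: the less elastic the supply of applicants to a
# single firm, the further wages can be held below marginal product.
for elasticity in (1, 4, 20):
    share = wage_share_of_marginal_product(elasticity)
    print(f"elasticity {elasticity:>2}: wage is {share:.0%} of marginal revenue product")
```

If the platform left firm-level elasticity roughly unchanged, this share would not move, which is the sense in which the mechanism does not create monopsony by itself.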

Another consideration is that employers may need some kind of cost mechanism introduced to keep their job listings truthful. AI is not just a problem in its use by applicants. There is also a recurring issue of “ghost jobs,” wherein an employer might list a position without intending to hire, as a signal of strong growth. AI further allows prospective employers to list marginal jobs with lower relative intent to hire at much lower cost. This produces a near inverse problem from what applicants face, where the reduced cost of job listings floods the market with posts containing weak or low informational-content signals that are not expected to reliably lead to employment opportunities.

Thus, a platform like this would require some form of guidance for the truthfulness and accuracy of employer listings, such that they are incentivised to hire for the listed positions and provide sufficient information such that they would be able to find a qualified applicant. Applicants cannot assess the value of applying unless firms accurately describe the relevant characteristics of the job, and a platform that disciplines applicants but not employers would only solve half the matching problem.

There is thus an informational requirement for the listings, the same as the application materials. In order for the system to work, the job listings need sufficient information such that an applicant can read the listing and make an assessment about their expected capacity to perform the job and the likelihood that they would, in fact, be qualified. While much of the labour market disruption that has been discussed is focused on applicant noise, reducing employer noise is just as pertinent to the function of a job platform like this. So how do we keep prospective employers in check on the platform?

Just as employers struggle to infer applicant quality from low-cost, AI-assisted applications, applicants struggle to infer job quality from low-cost, low-commitment listings. In both cases, the collapse of signalling degrades matching. A job platform that wants to improve allocative efficiency therefore has to solve two revelation problems at once: inducing applicants to reveal how strongly they value a role, and inducing employers to reveal how strongly they value filling a role. This means listing what the position pays, what the hiring criteria are, and how likely the firm is to fill said position. In mechanism-design terms, the platform should aim for a system in which truthful disclosure by employers is incentive compatible, rather than merely encouraged.

Offering a filtering service to cut down on the number of applications, selecting only high-propensity matches, would make a valuable proposition for employers to pay for the service, or rather produce some willingness-to-pay in order to post job listings. We therefore conclude the employer side may also benefit from a cost mechanism. Of course, while a person can only really perform one job, a firm may be looking to hire multiple people, so a cap doesn’t function nearly as well for firms as it might for applicants.

And thus, we return to a pecuniary cost mechanism. Simply charging an employer to list the position is incentive compatible in this platform structure, in that it induces a cost and signals to applicants that the firm is serious about hiring for this position. In having the platform charge for access, it constructs a mechanism that makes truthful disclosure by employers privately optimal. The objective is not to punish employers for uncertainty, but to reduce the deadweight loss created when applicants spend scarce attention on listings with low hiring probability or misleading attributes.

Again, breaking with other common platform structures, this model is not compatible with a visibility-based cost structure and would instead be better designed as a flat fee. While the position should appear for qualified candidates, higher search visibility is not going to improve matching per se, as it might on platforms that seek to attract a higher volume of clicks.

A posting fee may screen out low-intent vacancies, but truthful revelation of job attributes generally requires more than entry pricing and could demand a verifiable disclosure rule or penalties tied to realised hiring behaviour.
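
A back-of-the-envelope sketch of that distinction. It assumes a firm posts whenever its expected payoff is non-negative, that a ghost listing still yields some publicity value, and that the platform can add a deposit forfeited when no hire is made. The deposit is one hypothetical way to tie costs to realised hiring, and all names and numbers are invented.

```python
def expected_posting_payoff(hire_prob, value_of_hire, publicity_value,
                            flat_fee, deposit=0.0):
    """Expected payoff to a firm from listing one vacancy.

    Assumption: the deposit is returned only if the firm actually hires,
    so its expected cost is deposit * (1 - hire_prob).
    """
    expected_forfeit = deposit * (1 - hire_prob)
    return hire_prob * value_of_hire + publicity_value - flat_fee - expected_forfeit


def will_post(**listing):
    return expected_posting_payoff(**listing) >= 0


genuine = dict(hire_prob=0.8, value_of_hire=50, publicity_value=5)
ghost = dict(hire_prob=0.02, value_of_hire=50, publicity_value=5)

# A modest flat fee alone does not deter the ghost listing here...
print(will_post(**genuine, flat_fee=4), will_post(**ghost, flat_fee=4))
# ...but adding a deposit that is forfeited without a hire does.
print(will_post(**genuine, flat_fee=4, deposit=10), will_post(**ghost, flat_fee=4, deposit=10))
```

In this toy case the flat fee only screens out ghost listings once it exceeds their publicity value, whereas the contingent deposit bites hardest on exactly the listings least likely to hire.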

Implementation and other Considerations

Thus we arrive at a basic market structure where a firm pays to list their job and applicants bid on interview consideration. It’s still very much an open question how best to recalibrate a system that has run up into a significant barrier in the context of artificial intelligence, and the proposal of an auction framework is just a first step.

Looking towards practical considerations, it’s hard to say what this kind of platform might actually look like. What is the optimal endowment or denomination of application tokens? How many firms must post on the platform so that job listings are varied and differentiable enough for a prospective employee to have a distinct preference? Can such a mechanism satisfy the participation constraint, where both applicants and firms must expect non-negative utility from entering the process? Nevertheless, there is a sound theory behind it.

In the proposed platform, interview consideration is a scarce object and applicants choose how much of a fixed token budget to allocate across vacancies. Bids should not be mistaken for a direct measure of worker quality. They are an endogenous signal of expected private surplus from a match. That surplus will be higher for applicants who are more qualified, better matched, more interested in the role, or more confident they can convert an interview into an offer. The mechanism therefore works only under an additional condition. In practice, bids must be positively correlated with the employer-relevant dimension the platform is trying to recover, so that higher bids go with higher match quality or a higher probability of successful employment. If that correlation is weak, the platform may only sort by eagerness rather than by productivity.
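
This condition can be explored with a small simulation. It assumes each bid equals the applicant's true quality plus independent noise standing in for eagerness or overconfidence, and it measures how much better the top third of bidders is than the pool as a whole. The functional form, the normal distributions, and the parameter values are all assumptions made for illustration.

```python
import random
from statistics import mean


def shortlist_quality_lift(noise_sd, n_applicants=3000, shortlist_share=1 / 3, seed=0):
    """Mean quality of the top bidders minus mean quality of the whole pool.

    Assumption: bid = quality + noise, with both drawn from normal
    distributions. A larger noise_sd means bids track quality less closely.
    """
    rng = random.Random(seed)
    # Draw every quality first so each noise level is compared on the same pool.
    quality = [rng.gauss(0, 1) for _ in range(n_applicants)]
    noise = [rng.gauss(0, noise_sd) for _ in range(n_applicants)]
    bids = [q + e for q, e in zip(quality, noise)]

    slots = max(1, int(n_applicants * shortlist_share))
    ranked = sorted(range(n_applicants), key=lambda i: bids[i], reverse=True)
    shortlisted_quality = mean(quality[i] for i in ranked[:slots])
    return shortlisted_quality - mean(quality)


# From bids that track quality exactly to bids that are mostly eagerness.
for noise_sd in (0.0, 1.0, 5.0):
    lift = shortlist_quality_lift(noise_sd)
    print(f"noise sd {noise_sd}: shortlist quality lift = {lift:.2f}")
```

With no noise the shortlist is simply the best third of the pool, so the lift is at its maximum; as noise grows, the shortlist drifts toward a random draw and the lift shrinks toward zero.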

In general, the framework would move towards incentive compatibility on the applicant side, restoring informative selection, though whether it improves welfare depends on how bids ultimately map to match quality, how costly bidding is in welfare terms, and whether the platform can meaningfully prevent strategic distortions in its incentive structure. This improves the informational environment on the applicant side, though it does not by itself guarantee efficiency or fairness.

The employer side cannot be treated as passive. If applications are costly but vacancy creation is cheap and weakly disciplined, the platform simply shifts the noise from the applicant side of the market to the employer side. Formally, applicants possess private information about fit and quality, while firms possess private information about job characteristics and hiring intent. A well-designed platform must therefore solve a two-sided revelation problem. Entry fees for firms can screen out some low-intent or speculative vacancies, but fees alone do not guarantee truthful disclosure of wages, duties, timelines, or probability of hire. To make employer revelation incentive compatible, the mechanism would likely need verifiable posting requirements, penalties for misrepresentation, or fee structures contingent on actual recruiting behaviour.

There are several extensions and revisions necessary to create a functioning platform, but this initial framework offers an interesting exercise in addressing information asymmetry in this specific information environment. I would be interested to see how a formal model might expand on this idea and present a potential path forward for applicants to credibly distinguish themselves as serious about a given job listing compared with their peers, and expunge some of the AI induced noise currently clogging job channels.

Very coincidentally, I had just tweeted about this when I got a notification about this post. Magic!

The idea I had shared was a bit simpler than what you've presented. You pay a fee for an account on a separate website from LinkedIn or Indeed, but rather than finding listings there, you just receive a finite number of keys to submit with applications. Employers can then use up these keys permanently by clicking a link in an application that takes them to the site. We can easily expand on the idea to be much like what you've suggested: you pay a fee to get an account, and can pay higher and higher fees for better and better keys, like a bronze key for jobs you kinda think you're a good fit for or a pricey platinum key for cases where you're dead certain you'd be a good hire.

Obviously this concept differs strongly in that it involves people spending real money rather than bidding with tokens. But if we could somehow implement a good verification system (so that people can only have 1 account), it's easy to switch to a token system. The advantage of this version is that you don't need to make LinkedIn 2 for it to work, you just need employers to understand the signal and trust it when it's included in an application.

My experience as an employer (and an employee) is that many people are delusional about the kind of jobs they are suited for, and even in this system many very poorly qualified people would use all their tokens for jobs they were completely unsuited for and would stand a very small chance of getting.